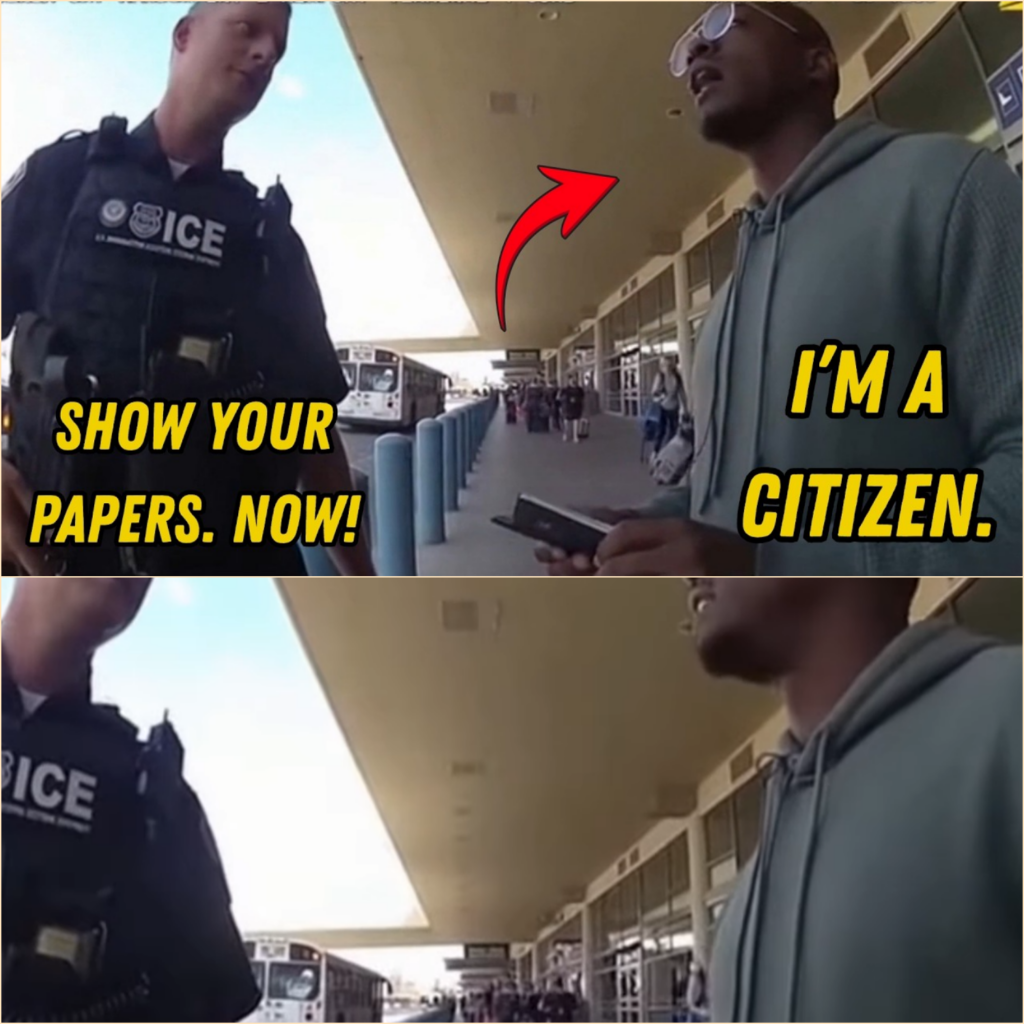

part2 ICE Agents Profile Black Veteran at Airport Shuttle — He’s a U.S. Citizen, $6.5M

🇺🇸 Shadows at the Terminal: Part II — The System Beneath the Silence

The settlement was supposed to close the chapter.

For the public, it did.

For the government, it did.

For the headlines, it did.

But for Marcus Holloway, it did something else entirely—it opened a door that should have stayed shut.

In the weeks after the courthouse press conference, life tried to return to normal. Marcus resumed meetings, reviewed logistics contracts, and answered calls from defense partners who now saw him not just as a consultant, but as a man who had stood at the center of a national controversy.

He smiled when necessary. He spoke when required. He worked.

But there were moments—quiet, unguarded moments—when his wrists still remembered the weight of steel.

Not pain.

Memory.

That was worse.

Then the first message arrived.

No sender name.

No subject line.

Just a single sentence:

“That day at Phoenix wasn’t random.”

Marcus deleted it immediately.

He had spent a lifetime learning to distinguish signal from noise. Anonymous messages were noise.

But three days later, another arrived.

“You were selected.”

He didn’t delete this one.

He studied it.

Not fearfully—but the way a soldier studies terrain before realizing the map is wrong.

The third message came with an attachment.

A redacted internal ICE scheduling log.

A single line stood out:

“Target evaluation: Phoenix Sky Harbor—Subject matching behavioral profile flagged for secondary verification.”

Secondary verification.

That phrase did not belong in routine airport screening.

Marcus had seen enough intelligence documentation in his military career to recognize structure when he saw it.

This was not improvisation.

This was procedure.

And procedure meant repetition.

He forwarded everything to his attorney.

Within forty-eight hours, a private investigative team was assembled.

Former federal analysts. Military intelligence veterans. Data specialists.

What they uncovered in the following days changed the entire direction of the case.

Marcus Holloway had not been randomly selected.

He had been pre-flagged.

Not by name.

But by algorithm.

1. The Pattern Hidden in Data

The system had a name buried deep in procurement documentation:

Behavioral Risk Interdiction Matrix (BRIM).

It was not publicly advertised.

It was not widely discussed.

But it existed—designed to identify “anomalous traveler behavior patterns” in high-traffic transit hubs.

On paper, it sounded neutral.

In practice, it was anything but.

The algorithm weighted dozens of variables:

Clothing formality relative to travel context

Lack of visible luggage volume

Travel route irregularities

Demographic statistical deviation from baseline airport population

“Composure under surveillance conditions”

That last variable was the most dangerous.

Because it did not measure threat.

It measured confidence.

And confidence, in statistical modeling, can be misclassified as concealment.

Marcus Holloway—retired military officer, logistics executive, traveling with minimal luggage for a same-day business return—fit the profile almost perfectly.

Not because he was suspicious.

Because he was efficient.

And efficiency, when misread through flawed models, becomes deviation.

Deviation becomes risk.

Risk becomes target.

2. The Agent Who Believed the Machine

Derek Henderson had not acted alone.

That was the first uncomfortable truth.

He had acted in alignment with a system that quietly reinforced his instincts.

By the time Henderson visually flagged Marcus, the system had already placed a low-level advisory marker on him based on pre-arrival data.

It did not say “detain.”

It said:

“Recommend secondary contact if opportunity presents.”

Those words mattered.

They did not instruct aggression.

They permitted it.

Henderson had simply filled in the gap.

Agent Miller, when interviewed later, described it differently.

“We were told to trust behavioral indicators,” he said. “Not override them.”

Trust.

Not verify.

That distinction would become central.

Because what Henderson interpreted as intuition was actually confirmation bias amplified by institutional design.

He did not invent suspicion.

He inherited it.

3. The Man Behind the Flagged Profile

Marcus reviewed the file again.

This time slowly.

Not as a victim.

But as someone reading intelligence on an enemy network.

Except the enemy, in this case, was structural.

The report included dozens of prior “high-confidence behavioral flags” issued at major airports across the country.

Phoenix. Dallas. Atlanta. Chicago.

A pattern emerged.

The majority of flagged individuals were not criminals.

They were:

Veterans

Military contractors

Federal employees

High-frequency business travelers

Individuals with minimal luggage profiles

The system was not detecting crime.

It was detecting deviation from statistical average behavior.

And statistical average behavior was heavily shaped by demographic bias.

The result was predictable, even if unintentional:

Certain groups triggered alerts more frequently—not because they were more dangerous, but because they were less represented in baseline modeling.

Marcus leaned back in his chair.

He had fought enemies who wore uniforms.

He had trained against adversaries who hid in mountains.

But this?

This was different.

This enemy did not announce itself.

It hid inside optimization.

4. The Second Investigation Begins

A federal oversight inquiry was quietly opened.

Not publicly announced.

Not headline-worthy.

At first.

The investigator assigned was Special Counsel Dana Whitmore, a career attorney known for rooting out internal misuse of surveillance systems.

When she met Marcus, she did not begin with sympathy.

She began with questions.

“Did Agent Henderson use excessive force?”

“Yes.”

“Did he violate protocol?”

“Yes.”

“Do you believe he acted with intent to discriminate?”

Marcus paused.

“No,” he said carefully. “I believe he acted with permission.”

That answer changed everything.

Because intent implied a single bad actor.

Permission implied design.

5. The Architecture of Misinterpretation

Whitmore’s team obtained internal training documentation for ICE field agents operating under BRIM-assisted advisories.

One slide read:

“When behavioral deviation is present, prioritize engagement to resolve uncertainty.”

Another:

“Subjects exhibiting controlled affect may indicate concealment of intent.”

A third:

“Composure should not be mistaken for innocence.”

Marcus read those lines twice.

Then a third time.

He understood what they had built.

They had constructed a system where calmness could be interpreted as guilt.

Where discipline could be mistaken for deception.

Where professionalism became suspicious simply because it was statistically uncommon in certain datasets.

It was not explicitly biased.

It was mathematically distorted.

And that made it more dangerous.

Because no one had to hate anyone for harm to occur.

They only had to trust the model.

6. Henderson’s Testimony

Derek Henderson was placed under administrative review.

He agreed to testify.

When asked why he escalated the encounter, he answered without hesitation.

“The system flagged him.”

Whitmore leaned forward.

“The system recommended contact, not detention.”

Henderson nodded.

“But it didn’t say don’t act.”

Silence filled the room.

That sentence would later become the most cited line in the entire investigation.

It revealed the core failure.

Ambiguity.

Not instruction.

Not policy.

Ambiguity dressed as authority.

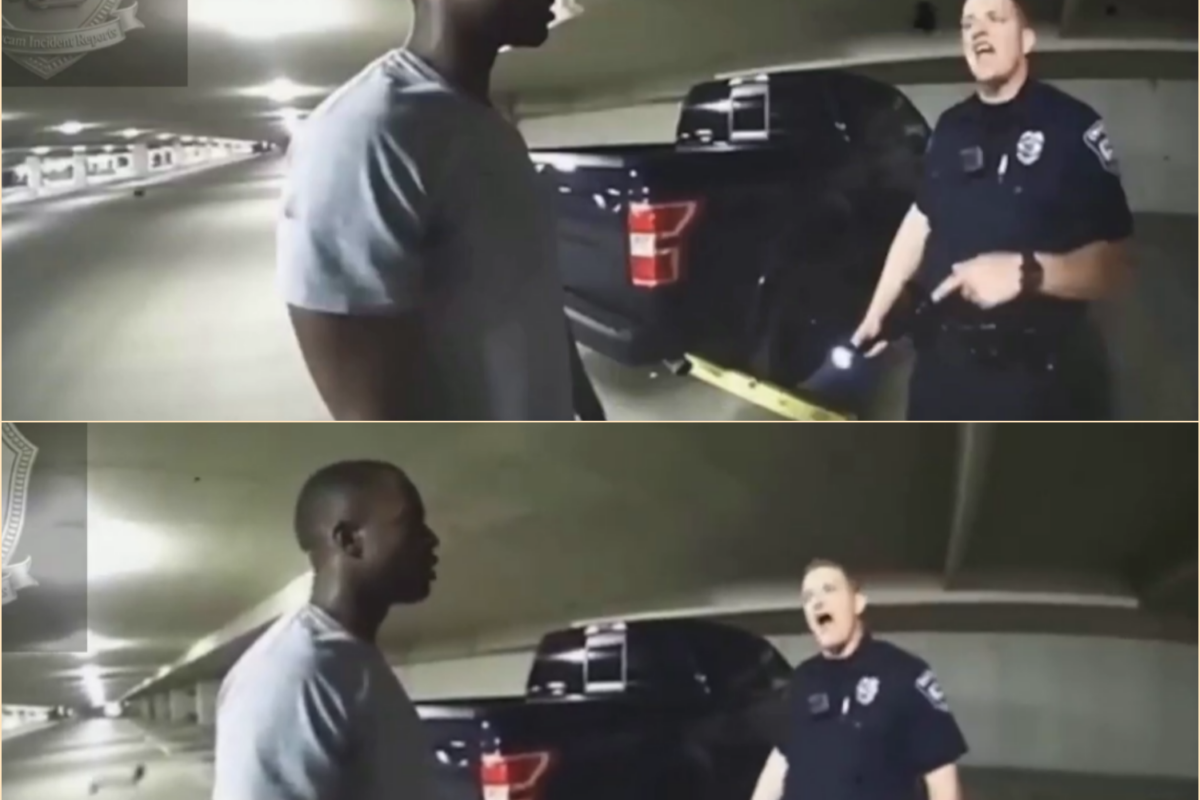

7. The Moment Before the Cuffs

Marcus requested access to surveillance footage from the airport.

He wanted to see himself as others saw him.

Not for validation.

For clarity.

When the video was played in the secure review room, no one spoke.

It showed everything:

The calm stance.

The stillness.

The approach of agents.

The escalation.

The moment of restraint.

And then—his voice.

Measured.

Controlled.

Unafraid.

One analyst whispered:

“He never resisted.”

Another replied:

“He didn’t need to. They already decided.”

That was the most unsettling realization.

The decision had not been made at the moment of arrest.

It had been made earlier—by data, by assumption, by system design.

The encounter was simply execution.

8. The System Responds

As the investigation expanded, internal resistance emerged.

Some officials defended BRIM as a “necessary modernization of threat detection.”

Others quietly admitted flaws.

But the most revealing response came from a leaked internal memo:

“Eliminating behavioral screening is not operationally feasible. Risk of missed threats outweighs individual misclassification concerns.”

That sentence reframed everything.

It was no longer about Marcus.

It was about acceptable error.

The system had decided that some innocent people would be harmed—statistically speaking—because preventing all risk was impossible.

Marcus read the memo once.

Then closed it.

Because he understood something fundamental:

They had not failed to prevent injustice.

They had accepted it as cost.

9. The Confrontation

Whitmore requested a final meeting with Marcus.

“This will not go to trial,” she said.

Marcus looked at her.

“Why not?”

“Because if it does,” she replied, “we will have to defend the system publicly. And we cannot.”

Silence.

Then Marcus asked the only question that mattered.

“How many others?”

Whitmore did not answer immediately.

Then:

“More than you.”

That was enough.

10. The Choice

Marcus was offered something unusual.

A settlement extension.

Additional compensation.

A private apology.

And a request for confidentiality.

In exchange for silence.

He declined immediately.

Not angrily.

Not emotionally.

Simply.

“No.”

Whitmore nodded.

“I didn’t think you would accept.”

Marcus stood.

“This isn’t about me anymore.”

11. Aftermath of Exposure

The internal report was partially leaked months later.

Not fully released.

Partially contained.

But enough surfaced to ignite public scrutiny again.

BRIM was suspended pending review.

Training protocols were rewritten.

Several officials were reassigned.

But no one called it what it was.

Not publicly.

Not officially.

A systemic failure of interpretation layered over automated suspicion.

Instead, they called it:

“Algorithmic adjustment.”

A softer phrase.

Less honest.

More comfortable.

12. Marcus Alone

Months later, Marcus returned to Phoenix Sky Harbor.

Not as a passenger.

Not as a subject.

But as himself.

He stood near the same curb.

The same sun.

The same noise.

A shuttle bus pulled in.

Doors opened.

People stepped out.

He watched quietly.

There were no agents this time.

No cuffs.

No confrontation.

Only memory.

And understanding.

Because now he knew something that could not be unlearned:

He had not been mistaken for a threat.

He had been selected by a system that did not understand the difference between deviation and danger.

And systems do not feel guilt.

Only people do.

Closing Thread

The case of Marcus Holloway was eventually archived.

Reclassified.

Referenced in policy discussions.

Cited in reform proposals.

But never fully resolved.

Because the real question it left behind was not legal.

It was philosophical:

When does safety become suspicion?

And what happens when a system designed to protect a nation begins to confuse discipline with deception?

Marcus never stopped asking that question.

Neither did anyone who watched the video.

And somewhere in the architecture of airports, databases, and algorithms, the same logic still exists—waiting for the next calm man at the curb… to be misread again.
